
6/21/2023 Trigger Airflow DAG from Python

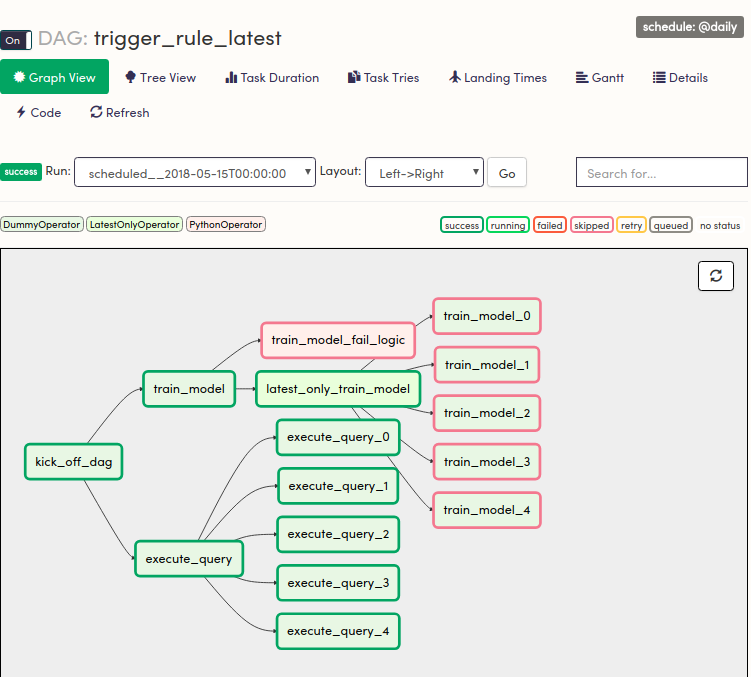

Databricks recommends using a Python virtual environment to isolate package versions and code dependencies to that environment. This isolation helps reduce unexpected package version mismatches and code dependency collisions.

The setup script includes commands such as `pipenv install apache-airflow-providers-databricks` and `airflow users create --username admin --firstname --lastname --role Admin --email`, with the name and email values left for you to supply. When you copy and run the script, you perform these steps: create a directory named `airflow` and change into that directory; use `pipenv` to create and spawn a Python virtual environment; initialize an environment variable named `AIRFLOW_HOME` set to the path of the `airflow` directory; install Airflow and the Airflow Databricks provider packages; create an `airflow/dags` directory, which Airflow uses to store DAG definitions; and initialize a SQLite database that Airflow uses to track metadata. The SQLite database and default configuration for your Airflow deployment are initialized in the `airflow` directory. In a production Airflow deployment, you would configure Airflow with a standard database.

A DAG run is an object representing an instantiation of the DAG in time. Running `python dag.py` only verifies the code; it does not run the DAG. If you want to run the DAG from the webserver, you need to place the DAG file in the `dags` directory. If you want to execute a Python function, it runs when Airflow triggers the DAG. To trigger Airflow DAGs manually, two methods exist.

There are multiple ways to implement cross-DAG dependencies in Airflow, including dataset-driven scheduling. The idea is that each task should trigger an external DAG. `TriggerDagRunOperator` triggers a DAG run for a specified `dag_id`; its `priority_weight` (int) parameter sets the priority weight of this task against other tasks. This section covers how and when you should use each method and how to view dependencies in the Airflow UI.
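The directory and environment-variable steps described above can also be sketched in Python. This is only an illustration and is not part of the original setup script: the helper name `prepare_airflow_home` is hypothetical, and setting `os.environ` affects the current process and any child processes it starts, not your interactive shell.

```python
import os
from pathlib import Path


def prepare_airflow_home(parent: Path) -> Path:
    """Create <parent>/airflow/dags and point AIRFLOW_HOME at <parent>/airflow.

    Hypothetical helper for illustration; it mirrors the manual steps
    (create the airflow directory, create the dags directory, set AIRFLOW_HOME).
    """
    airflow_home = parent / "airflow"
    dags_dir = airflow_home / "dags"

    # parents=True also creates the airflow directory itself;
    # exist_ok=True makes the call safe to repeat.
    dags_dir.mkdir(parents=True, exist_ok=True)

    # Visible to this process and to subprocesses launched from it.
    os.environ["AIRFLOW_HOME"] = str(airflow_home.resolve())
    return airflow_home
```

Calling `prepare_airflow_home(Path.cwd())` before launching Airflow commands from the same Python process would give those commands the same `AIRFLOW_HOME` that the shell-based steps set up.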
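One way to trigger a DAG from a plain Python script, outside of Airflow itself, is to call the webserver's REST API. The sketch below rests on assumptions the text above does not confirm: an Airflow 2.x deployment that exposes the stable REST API under `/api/v1` and has the basic-auth API backend enabled. The function names, base URL, and credentials are placeholders for illustration.

```python
import base64
import json
import urllib.request
from urllib.parse import quote


def build_trigger_request(base_url, dag_id, username, password, conf=None):
    """Build (but do not send) the POST request that asks Airflow to start a DAG run."""
    # Assumed endpoint: POST /api/v1/dags/{dag_id}/dagRuns (Airflow 2.x stable REST API).
    url = f"{base_url.rstrip('/')}/api/v1/dags/{quote(dag_id, safe='')}/dagRuns"

    # "conf" carries optional run-level parameters that tasks can read.
    body = json.dumps({"conf": conf or {}}).encode("utf-8")

    # Assumption: the deployment accepts HTTP basic authentication.
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")

    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",
        },
    )


def trigger_dag(base_url, dag_id, username, password, conf=None):
    """Send the trigger request and return the decoded JSON response."""
    request = build_trigger_request(base_url, dag_id, username, password, conf)
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.loads(response.read().decode("utf-8"))
```

With a webserver running locally, a call such as `trigger_dag("http://localhost:8080", "example_dag", "admin", "<password>", {"source": "script"})` would be expected to return the JSON description of the new DAG run. Splitting request construction from sending keeps the URL and payload easy to inspect without a live Airflow instance.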
